The Secret: I Don't Start With a Prompt

The Question I Get Every Day

"I used your exact prompt and my image looks nothing like yours. What am I doing wrong?"

You're not doing anything wrong. You're just missing the other 90% of the process.

The prompt you see on Civitai — the one attached to the final image — that's the last step of a pipeline that might have 5, 10, or even 15 steps before it. Copying that prompt is like reading the last page of a book and expecting to understand the whole story.

Let me show you what's actually happening behind every image I post.

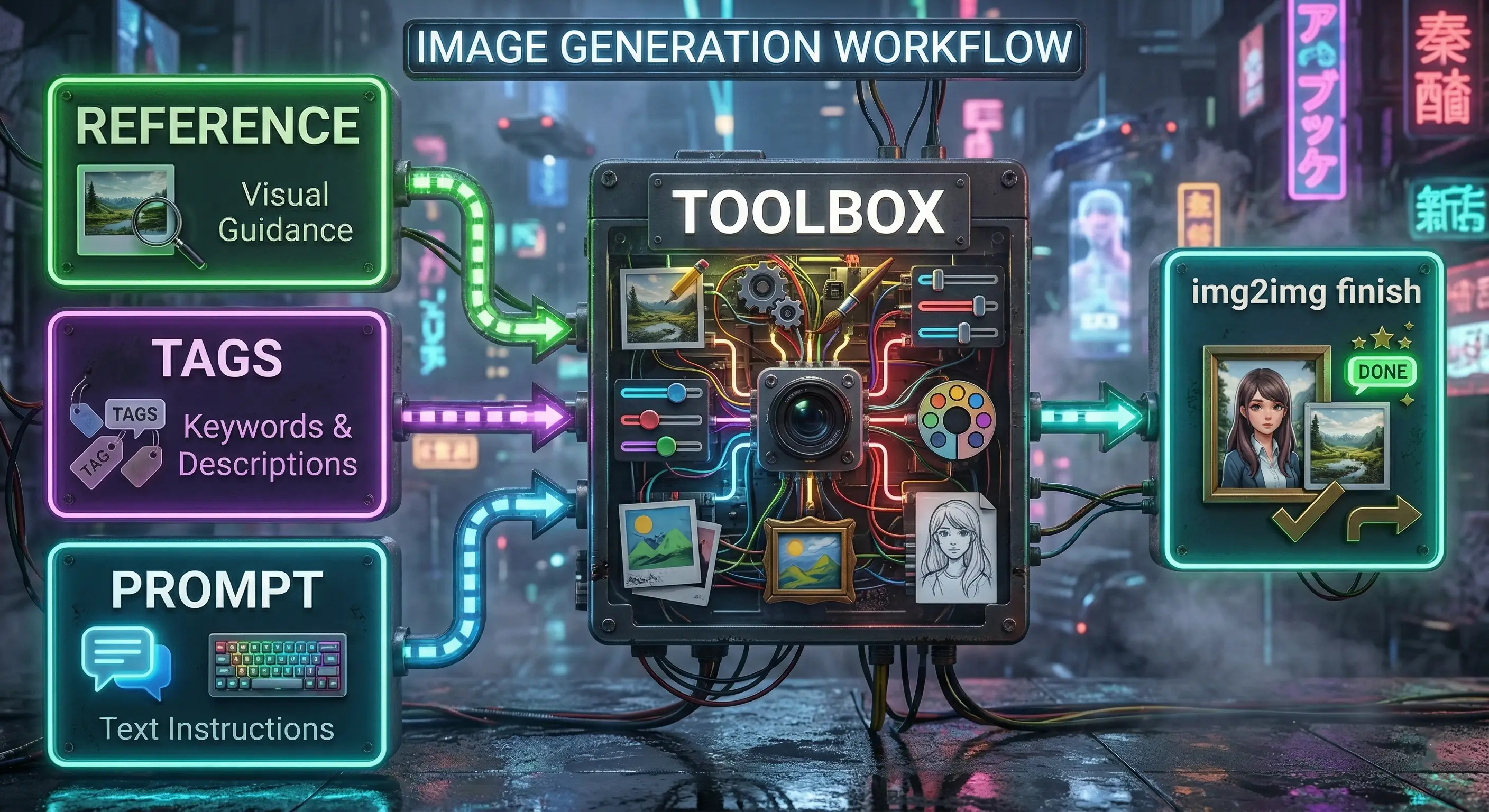

The Pipeline

Every image I make goes through a system. It's not the same steps every time — I mix and match depending on what I'm building — but the structure always looks like this:

Three possible starts. A flexible middle. One finish.

The Start: Three Entry Points

I pick ONE of these depending on what I'm making. They're not steps — they're different doors into the same building.

Starting from a Reference Image

I find something I like — anywhere on the internet — and use it as a visual starting point. Not to copy it, but to give the AI a foundation to build from.

This is img2img, and it's probably behind most of the images people try, and fail, to replicate from my prompts. You're seeing the output of a process that started from an image you've never seen.

Starting from Extracted Tags

Instead of feeding the image directly to the AI, I run it through a tagger, an AI model that analyzes the image and describes what it recognizes: the pose, the lighting, the colors, the clothing, the mood, the style. All of it, broken down into tags.

Then I take those tags and feed them into a completely fresh text-to-image generation. The result looks nothing like the original, but it carries the DNA of what made the original work.
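To make the tags-to-prompt handoff concrete, here is a minimal sketch. It assumes the tagger returns a mapping of tag to confidence score, which is common but not universal. The function name, the threshold, and the sample tags are all made up for illustration; this is not the actual tooling behind the images.

```python
def tags_to_prompt(tag_scores, threshold=0.35, exclude=(), extra=()):
    """Turn tagger output (tag -> confidence) into a comma-separated prompt.

    Hypothetical helper: the threshold and the exclude/extra lists are
    assumptions for illustration, not settings from any specific tagger.
    """
    excluded = {tag.lower() for tag in exclude}
    kept = [
        (tag, score)
        for tag, score in tag_scores.items()
        if score >= threshold and tag.lower() not in excluded
    ]
    # Put the tags the tagger is most confident about first.
    kept.sort(key=lambda pair: pair[1], reverse=True)
    ordered = [tag for tag, _ in kept]
    # Append hand-written tags that steer the new image somewhere else.
    for tag in extra:
        if tag not in ordered:
            ordered.append(tag)
    return ", ".join(ordered)


# Made-up tagger output for a reference image.
example_scores = {
    "portrait": 0.97,
    "rim lighting": 0.81,
    "red jacket": 0.74,
    "watermark": 0.62,
    "smile": 0.20,
}

prompt = tags_to_prompt(
    example_scores,
    exclude=("watermark",),
    extra=("city street at night",),
)
print(prompt)
```

The resulting string goes into a fresh txt2img run. Dropping low-confidence tags and unwanted ones (like a watermark) is where most of the judgment comes in.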

Starting from a Prompt

Sometimes I just start with an idea in my head. No reference. Pure txt2img.

Other times I'll grab the positive prompt and tags from someone else's image on Civitai — or anywhere the generation data is available — and use that as my starting point for txt2img. It's not about copying their image. It's about using their tags as a launching pad for something new.

This is what most people think I do 100% of the time. It's actually the least common starting point for my best work.

The Middle: The Toolbox

This is where it gets deep — and it's different every single time.

The middle isn't a recipe. It's a toolbox. I pull out different tools in different orders depending on what I'm making. Some images go through one step in the middle. Some go through ten.

Here's a sample of what might happen:

The quantity approach:

- Generate 200+ low-quality images from a recipe file

- Cherry-pick the 5 best compositions

- Feed those winners into the next stage

The variation approach:

- Take a prompt and run it through a splitter that breaks it into slots

- Each slot has multiple options — different poses, outfits, lighting, angles

- The system automatically generates every combination

- 8 poses × 6 outfits × 4 lighting setups = 192 unique images from one base concept
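The slot idea above can be sketched in a few lines of Python. The template, slot names, and option lists below are placeholders chosen for illustration, and this is only one way a splitter could work, not the actual system described here.

```python
from itertools import product

# Hypothetical slots: 8 poses, 6 outfits, 4 lighting setups.
slots = {
    "pose": [
        "standing", "sitting", "walking", "running",
        "kneeling", "leaning on wall", "looking back", "arms crossed",
    ],
    "outfit": [
        "red jacket", "white dress", "business suit",
        "hoodie", "raincoat", "kimono",
    ],
    "lighting": ["golden hour", "neon lights", "soft studio light", "moonlight"],
}

# Base concept with one placeholder per slot.
template = "portrait of a woman, {pose}, {outfit}, {lighting}"


def expand(template, slots):
    """Return one prompt for every combination of slot options."""
    names = list(slots)
    option_lists = [slots[name] for name in names]
    return [
        template.format(**dict(zip(names, combo)))
        for combo in product(*option_lists)
    ]


prompts = expand(template, slots)
print(len(prompts))  # 8 * 6 * 4 = 192
print(prompts[0])    # portrait of a woman, standing, red jacket, golden hour
```

Each prompt in the list would then be queued as its own generation. Adding a fourth slot multiplies the count again, so the batch size grows fast.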

The refinement approach:

- Start with one carefully crafted high-quality generation

- Modify specific tags to push it in a new direction

- Add custom tags: combinations I came up with that are unlikely to appear in any training data

The hybrid approach:

- Combine any of the above

- Take batch results, extract tags from the best ones, feed those tags into a new round

- Layer strategies on top of each other

The middle can be 1 step or 15 steps. That's the point — it's flexible.

The End: Always img2img

No matter how I got here, every image finishes the same way: multiple rounds of img2img passes.

I take whatever came out of the middle — whether it's a rough batch pick or a carefully crafted generation — and I run it through several img2img refinement passes. Each pass tightens the details, fixes the composition, cleans up artifacts, and pushes the quality to where I want it.
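Structurally, the finish is a loop where each pass takes the previous pass's output as its input. The sketch below shows only that shape. `img2img` is a stand-in for whatever backend you use, and the decreasing strength schedule is an assumption for illustration, not a documented setting from this workflow.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

Image = TypeVar("Image")


def refine(
    image: Image,
    prompt: str,
    img2img: Callable[[Image, str, float], Image],
    strengths: Sequence[float] = (0.6, 0.45, 0.3),
) -> Tuple[Image, List[Image]]:
    """Run several img2img passes, feeding each output into the next pass.

    `strengths` is a hypothetical schedule: bigger changes first,
    smaller touch-ups last.
    """
    history = [image]
    for strength in strengths:
        image = img2img(image, prompt, strength)
        history.append(image)  # keep every stage so you can roll back
    return image, history


def fake_img2img(image: str, prompt: str, strength: float) -> str:
    """Placeholder backend that only records which passes were applied."""
    return f"{image} -> pass@{strength}"


final, history = refine("draft.png", "final prompt", fake_img2img)
print(final)         # draft.png -> pass@0.6 -> pass@0.45 -> pass@0.3
print(len(history))  # 4: the draft plus three passes
```

Swapping `fake_img2img` for a real call is the only change needed. Keeping the history matters in practice, because a later pass can undo something an earlier pass got right.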

The prompt you see on Civitai? That's from the final img2img pass. It's the finishing coat of paint, not the blueprint.

Why This Matters

When you copy my prompt and get a different result, you're copying the finishing step of a process that might have included:

- A reference image you've never seen

- Tags that were extracted and recombined from that image

- Hundreds of batch variations that were filtered down to the best few

- Multiple rounds of refinement that built on all of the above

The prompt alone gets you in the neighborhood. The full pipeline gets you to the destination.

What You Can Do Right Now

I'm not telling you this to flex. I'm telling you because once you understand this, it changes how you approach image generation:

Stop thinking prompt-first. The prompt is one tool in the toolbox, not the whole toolbox. Most people spend hours tweaking a prompt when they should be changing their approach entirely.

Start collecting references. Build a folder of images that inspire you. They don't have to be AI-generated. Screenshots, photos, artwork — anything that has a quality you want to capture. This becomes your starting material.

Learn img2img. If you're only doing txt2img, you're working with one arm tied behind your back. img2img is what turns a good concept into a finished piece.

Think in passes, not one-shots. The best images are almost never generated in a single run. They're built up in layers, refined through multiple passes, and polished at the end.

Experiment with tag extraction. Take an image you love, pull the tags out of it, and use those tags as a starting point for something completely new. You'll be surprised how well this works.

Going Deeper

This article is the overview — the 10,000-foot view. I'm building detailed breakdowns of each part of the pipeline:

- Tag extraction: How I reverse-engineer the DNA of any image

- Batch generation: The quantity-first strategy and how to cherry-pick efficiently

- The recipe system: Generating hundreds of structured variations automatically

- The img2img finish: My multi-pass refinement process

- The complete workflow: All the pieces connected, start to finish

These deep dives are available for Prompt Insider and Full Workshop members.

If the overview already changed how you think about image generation, the detailed breakdowns will change how you actually work.